i think i just came up with a new compact data structure for variable sized integers that supports efficient search. let's say you want to encode 1000 people you follow into the social graph index using martti's encoding. you simply bucket the sorted user-id integers contiguously based on the size of its encoding. for example:

u16_offset: 6

UID(u8) * 6 -> 1,2,42,53,128,255

UID(u16) * 1000 -> 1024, 4086, 65532, ...

the header of the array simply stores offsets into the 2/4-byte array segments. if you want to check if a user id of varint encoding size 1 exists in the person follow list, you can linear scan through the 1 byte entries (probably faster than binary search for ~255 max entries)

if the userid encodes to 2 bytes, you can jump to the 2-byte offset (stored in the array header) and then binary search through that, assuming this would be the larger part of the array.

this gives you the smallest possible encoding and direct indexing... not sure if anyone has thought of this before.

I love compact and fast data structures 🤓

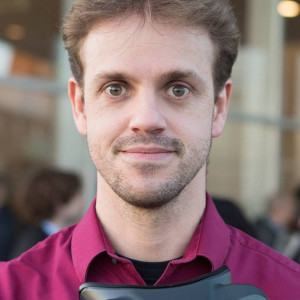

martti already added follow/WoT indices to #nostrdb, now I'm just brainstorming space optimizations!

Login to reply

Replies (14)

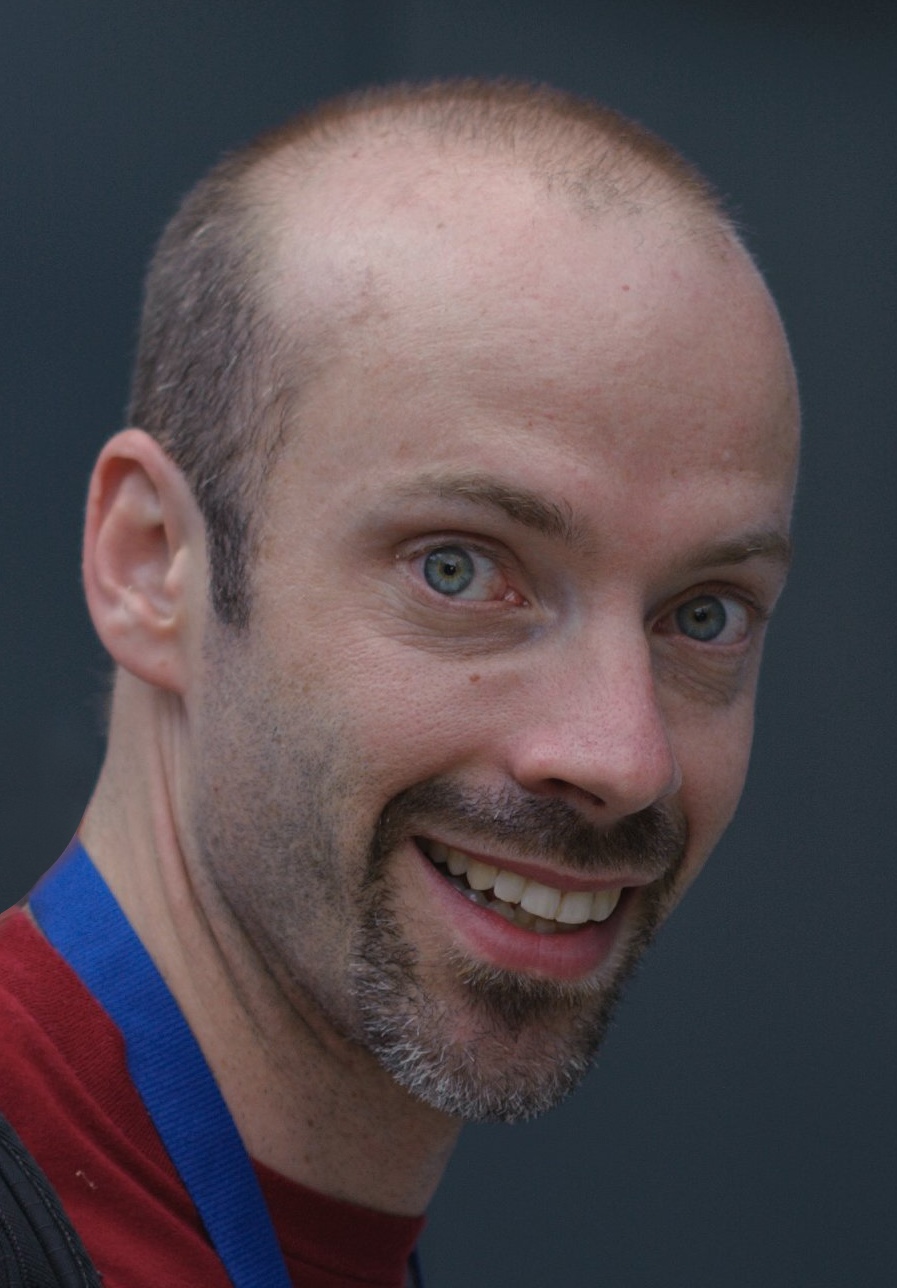

It’s the cheap wine, then.

soon

Wat. Sounds cool, bro.

Can you come up with a new compact data structure to get Bitcoin back up over $100k.

Go to luzzo pizza and pay with sats.

y u so obsessed with bitcoin

Isn't that just a database index? Are you essentially writing lots of indexes by hand on nostrdb?

lmdb is just k/v, so the index would be:

k: uid

v: varint-partitioned-array of people they follow

You could do a composite key:

k: (uid, uid) -> user, follow

but that would be horribly space inefficient

yes all of our indices are hand rolled and optimized for space

#cointards are an easy target. Endless hours of fun. 😅

Yes, it thoguht there was a name for these types of indexing data structures. I remember seeing something like that (you just store the array itself as varints) in school. That's why id locality was (maybe still is) such a big deal back in the day. I remember a paper that discussed those two alternatives in the light of write and read speeds and you would choose them based on your system being write or read centric. We even did a server mirroring setup one day on the old Oracle with one server to receive data with a write index and 3 others were read-only and using the read index. That was the first time I understood that sometimes the best option is all of them :)

I tried to get ai to give me a name for it but i don’t think there is one. I couldn’t find anywhere online describing this either. It is kind of obscure. Succinct data structures are a bit of a black art.

Yeah, I don't have my notes anymore. But there are hundreds of these inverted index compression techniques in the wild. You can see some details here:

https://jshun.csail.mit.edu/6506-s24/lectures/lecture13-2.pdf

Seems like it would only be a win if you ensure low IDs are the popular ones. And then, consider a bitmap?

the alternative is an array of 32 bit integers? There can be a large number of follows for every user you are building a follow index for. A follow list you want to be able to bsearch. I don’t see why this space savings wouldn’t add up.

Most will likely be 2 byte ints, i just don’t want to have to choose 4 bytes when most will be 2.

4 bytes * 1000 follows * 16000 users = 64 mb

vs

2 bytes * 1000 follows * 16000 users = 32 mb

i guess it comes down to if the complexity is worth it to save 32mb of storage 🤷♂️